structured counterfactual simulations for ai agents. same seed, different actor, different world.


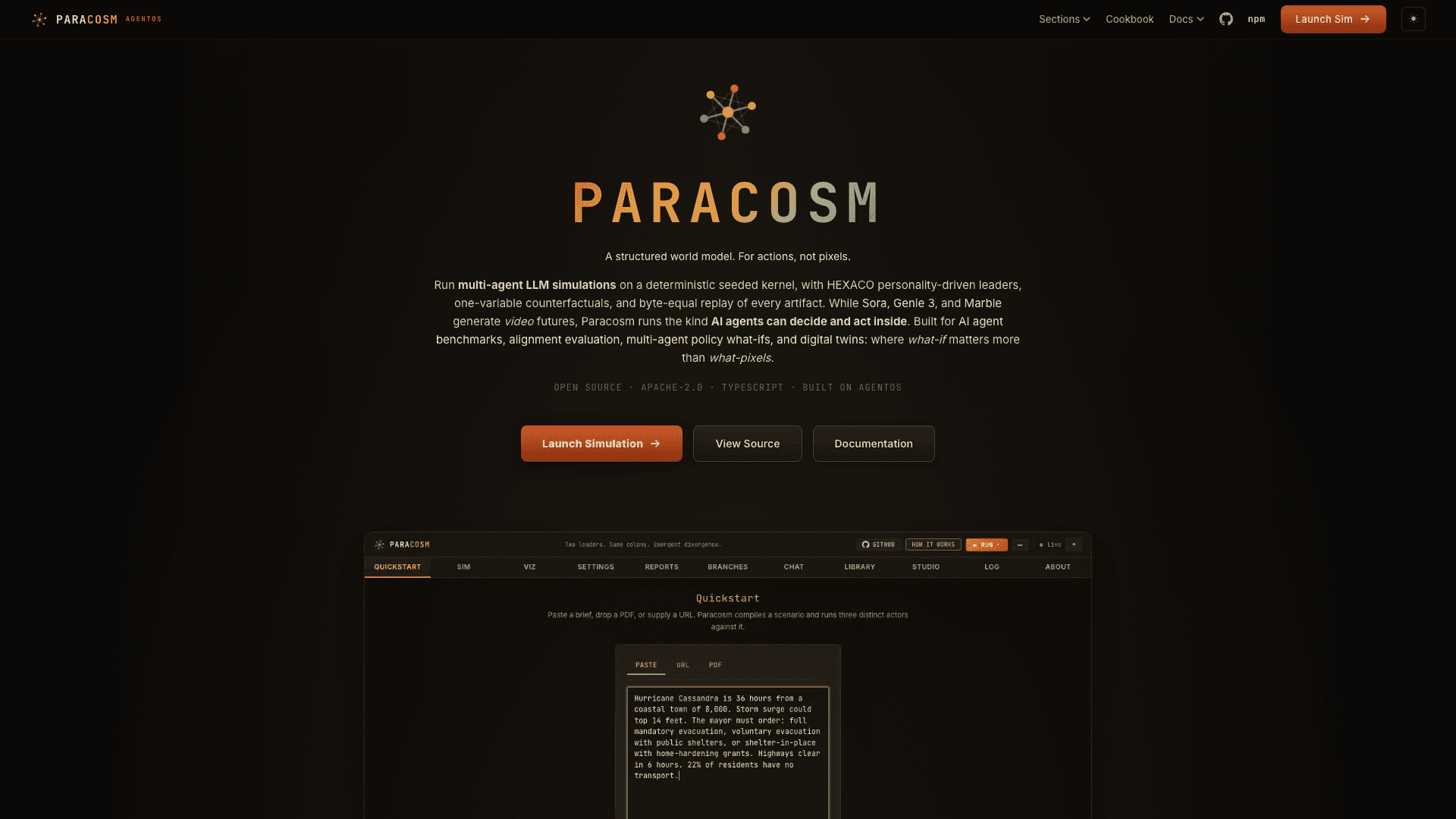

Paracosm is a structured counterfactual world simulator for AI agents. Start from a prompt, brief, URL, or hand-written scenario draft. The compiler grounds it into a typed ScenarioPackage — a JSON contract with five state bags, labels, departments, metrics, setup defaults, and generated hooks. An actor with a HEXACO personality profile runs that world. A deterministic kernel drives state, time, and randomness. An LLM generates events, specialist analyses, and the actor's decisions. Specialists can forge new computational tools at runtime inside a V8 sandbox; an LLM judge approves each forge before it enters the decision pipeline. Personality traits drift. One turn ends, the next begins.

The product is the structural contrast: two runs against an identical seed and compiled world contract produce measurably divergent trajectories when you swap one variable — the actor's personality. The kernel side is reproducible. The divergence comes from the LLM stages reading HEXACO profiles and deciding differently.
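To make "measurably divergent" concrete, here is a minimal sketch that compares two runs turn by turn. It is not Paracosm's API. The MetricSnapshot shape and the metricDivergence helper are hypothetical, and the sketch assumes each run records one metrics object per turn, keyed like the metrics field in the scenario JSON. The sample numbers are illustrative, not Paracosm output.

```typescript
// Hypothetical shape: one metrics object per turn, e.g. { morale: 70, resources: 60 }.
type MetricSnapshot = Record<string, number>;

interface Divergence {
  firstDivergentTurn: number | null; // 1-based turn index, null if the runs never differ
  maxAbsDelta: Record<string, number>; // largest per-metric gap across shared turns
}

// Compare two trajectories over the turns both runs completed.
function metricDivergence(a: MetricSnapshot[], b: MetricSnapshot[]): Divergence {
  const turns = Math.min(a.length, b.length);
  const maxAbsDelta: Record<string, number> = {};
  let firstDivergentTurn: number | null = null;

  for (let t = 0; t < turns; t++) {
    const keys = new Set([...Object.keys(a[t]), ...Object.keys(b[t])]);
    for (const key of keys) {
      // A metric missing from one run is treated as 0 in this sketch.
      const delta = Math.abs((a[t][key] ?? 0) - (b[t][key] ?? 0));
      maxAbsDelta[key] = Math.max(maxAbsDelta[key] ?? 0, delta);
      if (delta > 0 && firstDivergentTurn === null) firstDivergentTurn = t + 1;
    }
  }
  return { firstDivergentTurn, maxAbsDelta };
}

// Illustrative trajectories: same seed and world, different actor.
const visionary = [
  { morale: 70, resources: 60 },
  { morale: 74, resources: 52 },
  { morale: 78, resources: 41 },
];
const pragmatist = [
  { morale: 70, resources: 60 },
  { morale: 68, resources: 63 },
  { morale: 66, resources: 65 },
];

console.log(metricDivergence(visionary, pragmatist));
// { firstDivergentTurn: 2, maxAbsDelta: { morale: 12, resources: 24 } }
```

Only shared turns are compared, so runs of different lengths still produce a result; a fuller comparison would also look at decisions and specialist notes, not just metrics.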

Paracosm aligns with the structured world model definition from Xing 2025 and the ACM Computing Surveys 2025 world-model survey: a simulator for actionable possibilities, not a video generator. It is also a counterfactual world simulation model in the sense of Kirfel et al. 2025: a substrate for replaying an event with one variable changed and surfacing the effect. The closest LLM-world-model implementation anchor is Yang et al. 2026, which evaluates LLM-based world models through policy verification, action proposal, and policy planning.

Paracosm takes the safe product version of that idea: externalize the world into schema, citations, tools, snapshots, and seeded transitions, then let the LLM reason over that structure.

- Not a generative visual world model. Sora, Genie 3, and World Labs Marble produce pixels or 3D scenes. Paracosm produces a typed RunArtifact: metrics, decisions, specialist notes, citations, and forged tool summaries.

- Not a JEPA-style predictive-representation model. Yann LeCun's AMI Labs trains neural representations from sensor streams. Paracosm composes a deterministic kernel with an LLM reasoner; no training pipeline.

- Not a multi-agent orchestration framework. LangGraph, AutoGen, CrewAI, OpenAI Agents SDK, and Google ADK build agentic workflows that execute real tasks. Paracosm is a simulation; nothing leaves the run.

- Not a swarm intelligence simulator. MiroFish and OASIS simulate thousands to a million emergent agents for aggregate prediction. Paracosm is top-down (one actor decides), runs ~100 agents by design, and outputs a seeded trajectory whose kernel side is reproducible, plus the divergence across actors.

- Not a generative-agents library. Stanford Generative Agents (Smallville) and Google DeepMind Concordia build emergent social simulacra in open-ended sandboxes. Paracosm ships a deterministic turn loop, personality drift, runtime tool forging, and a universal result schema.

Actors can be colony commanders, CEOs, generals, ship captains, department heads, AI systems, governing councils, or any entity that receives information, evaluates options, and makes choices that shape the world. The simulation does not care what they represent. It cares how they decide.

JSON is the contract, not the product boundary. Today, compileScenario() accepts a scenario JSON draft and grounds it with seedText or seedUrl. The next API layer is a one-call prompt/document wrapper that asks an LLM to propose that same JSON contract, validates it, then compiles and runs it — without ever bypassing the schema, the kernel, or the artifact.

```json
{
  "id": "submarine-habitat",
  "labels": { "name": "Deep Ocean Habitat", "populationNoun": "crew", "settlementNoun": "habitat" },
  "departments": ["Life Support", "Science", "Security", "Logistics"],
  "metrics": { "morale": 70, "resources": 60, "stress": 30 },
  "setup": { "turnLength": "1 week" }
}
```
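Because JSON is the contract, a draft can be checked structurally before it reaches the compiler. A minimal sketch, assuming only the five fields in the example draft: the ScenarioDraft interface and the isScenarioDraft type guard are hypothetical and cover far less than the full ScenarioPackage schema (state bags, hooks, and so on).

```typescript
// Hypothetical type covering only the fields in the example draft;
// the real ScenarioPackage contract has more.
interface ScenarioDraft {
  id: string;
  labels: { name: string; populationNoun: string; settlementNoun: string };
  departments: string[];
  metrics: Record<string, number>;
  setup: { turnLength: string };
}

const isObject = (v: unknown): v is Record<string, unknown> =>
  typeof v === "object" && v !== null && !Array.isArray(v);

// Structural check only: field presence and primitive types.
function isScenarioDraft(v: unknown): v is ScenarioDraft {
  if (!isObject(v) || typeof v.id !== "string") return false;
  const { labels, departments, metrics, setup } = v;
  return (
    isObject(labels) &&
    ["name", "populationNoun", "settlementNoun"].every((k) => typeof labels[k] === "string") &&
    Array.isArray(departments) &&
    departments.every((d) => typeof d === "string") &&
    isObject(metrics) &&
    Object.values(metrics).every((m) => typeof m === "number" && Number.isFinite(m)) &&
    isObject(setup) &&
    typeof setup.turnLength === "string"
  );
}

const draft: unknown = JSON.parse(`{
  "id": "submarine-habitat",
  "labels": { "name": "Deep Ocean Habitat", "populationNoun": "crew", "settlementNoun": "habitat" },
  "departments": ["Life Support", "Science", "Security", "Logistics"],
  "metrics": { "morale": 70, "resources": 60, "stress": 30 },
  "setup": { "turnLength": "1 week" }
}`);

console.log(isScenarioDraft(draft)); // true
```

A hand-rolled guard keeps the sketch dependency-free; a schema library would give better error messages about which field failed.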

The dashboard's Quickstart tab compiles this brief into a running sim within a minute. A curated library of 10 HEXACO archetypes ships at paracosm/leader-presets for programmatic runBatch sweeps or swap-actor controls in downstream UIs.

```typescript
import { WorldModel } from 'paracosm/world-model';

// worldJson, visionaryActor, and pragmatistActor are assumed to be defined
// elsewhere (a scenario JSON and two actor definitions).
const wm = await WorldModel.fromJson(worldJson);

// Run the trunk with per-turn snapshots captured.
const trunk = await wm.simulate(visionaryActor, {
  maxTurns: 6, seed: 42, captureSnapshots: true,
});

// Branch at turn 3 with a different actor. Turns 1-3 are not recomputed;
// the forked kernel resumes from the captured state.
const fork = await wm.forkFromArtifact(trunk, 3);
const branch = await fork.simulate(pragmatistActor, { maxTurns: 6, seed: 42 });

console.log(branch.metadata.forkedFrom); // { parentRunId, atTurn: 3 }
```

The kernel round-trips through JSON.stringify, so snapshots persist to disk for replay or audit. In the dashboard, every UI-initiated run captures snapshots; the Reports tab shows a ↳ Fork at Turn N button on each completed turn.
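Since kernel state survives JSON.stringify, persisting a snapshot is ordinary file I/O. The sketch below assumes a hypothetical KernelSnapshot shape (turn number, PRNG state, metrics) rather than Paracosm's actual snapshot type. It writes the snapshot to a temporary file with Node's fs APIs, reads it back, and checks that re-serialization is byte-equal.

```typescript
import { mkdtempSync, readFileSync, writeFileSync } from "node:fs";
import { tmpdir } from "node:os";
import { join } from "node:path";

// Hypothetical snapshot shape: plain JSON data only (no Map, Date, or class
// instances), which is what makes a lossless JSON round-trip possible.
interface KernelSnapshot {
  turn: number;
  rngState: number;
  metrics: Record<string, number>;
}

function saveSnapshot(path: string, snap: KernelSnapshot): void {
  writeFileSync(path, JSON.stringify(snap), "utf8");
}

function loadSnapshot(path: string): KernelSnapshot {
  return JSON.parse(readFileSync(path, "utf8")) as KernelSnapshot;
}

const dir = mkdtempSync(join(tmpdir(), "snapshots-"));
const path = join(dir, "turn-3.json");
const original: KernelSnapshot = { turn: 3, rngState: 123456789, metrics: { morale: 64, resources: 48 } };

saveSnapshot(path, original);
const restored = loadSnapshot(path);

// Byte-equal after re-serialization: the round-trip lost nothing.
console.log(JSON.stringify(restored) === JSON.stringify(original)); // true
```

Values such as NaN, undefined, Map, or Date would not survive this round-trip unchanged (NaN becomes null, undefined properties are dropped, a Map becomes {}, a Date becomes a string), which is why keeping kernel state JSON-shaped is a real design constraint.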

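```typescript
// Assumes wm is a WorldModel and storedArtifact is a previously saved RunArtifact.
const replay = await wm.replay(storedArtifact);

console.log(replay.matches);    // true when the kernel produces byte-equal output
console.log(replay.divergence); // first-mismatch JSON pointer when matches === false
```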

Replay is free — LLM stages are not invoked. Use it for regression testing (replay golden artifacts in CI) or forensic comparison (find the first kernel-state divergence between two paracosm versions).
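A first-mismatch JSON pointer, like the one replay.divergence reports, can be computed with a depth-first walk that compares two JSON values and returns an RFC 6901 pointer to the first difference. This is a minimal sketch under that assumption, not Paracosm's implementation. firstMismatch is a hypothetical name, and "first" here means first in the expected value's key order.

```typescript
type Json = null | boolean | number | string | Json[] | { [key: string]: Json };
type JsonObject = { [key: string]: Json };

// RFC 6901 escaping: "~" becomes "~0", then "/" becomes "~1".
const escapeToken = (t: string): string => t.replace(/~/g, "~0").replace(/\//g, "~1");
// Own-property check, so inherited names like "constructor" are not mistaken for keys.
const has = (o: unknown, k: string): boolean => Object.prototype.hasOwnProperty.call(o, k);
const isObj = (v: Json): v is JsonObject => typeof v === "object" && v !== null && !Array.isArray(v);

// Returns the pointer of the first differing location, or null when deeply equal.
function firstMismatch(expected: Json, actual: Json, pointer = ""): string | null {
  if (expected === actual) return null;

  if (Array.isArray(expected) && Array.isArray(actual)) {
    for (let i = 0; i < Math.max(expected.length, actual.length); i++) {
      const child = `${pointer}/${i}`;
      if (i >= expected.length || i >= actual.length) return child; // length mismatch
      const hit = firstMismatch(expected[i], actual[i], child);
      if (hit !== null) return hit;
    }
    return null;
  }

  if (isObj(expected) && isObj(actual)) {
    const keys = [...Object.keys(expected), ...Object.keys(actual).filter((k) => !has(expected, k))];
    for (const k of keys) {
      const child = `${pointer}/${escapeToken(k)}`;
      if (!has(expected, k) || !has(actual, k)) return child; // key on one side only
      const hit = firstMismatch(expected[k], actual[k], child);
      if (hit !== null) return hit;
    }
    return null;
  }

  // Different primitives, or containers of different kinds.
  return pointer;
}

const golden = { turn: 4, metrics: { morale: 61 }, decisions: ["ration", "expand"] };
const rerun = { turn: 4, metrics: { morale: 61 }, decisions: ["ration", "retreat"] };
console.log(firstMismatch(golden, rerun)); // /decisions/1
```

In a CI regression test, a null result means the golden artifact replayed cleanly; any string points straight at the first kernel-state field that changed.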

Actor personality drifts as the simulation progresses. A high-Conscientiousness CEO who weathers a crisis grows steadier. A low-Agreeableness general who suffers betrayal grows colder. Drift modulates HEXACO traits within bounded ranges so the actor stays coherent across long runs while reflecting accumulated experience. The drift function is deterministic given the seed, so the path is reproducible even though the LLM-generated decisions are not.
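Bounded, seed-deterministic drift can be sketched as event-driven pressure plus a small seeded jitter, clamped to a band around the actor's starting profile. Everything here is an assumption for illustration: the six-trait record on a 0 to 1 scale, the mulberry32 PRNG, the jitter size, the ±0.15 band, and the pressure values are not Paracosm's documented parameters. The sketch does show the two properties described above: the same seed reproduces the same path, and traits never leave their bounds.

```typescript
type Trait = "H" | "E" | "X" | "A" | "C" | "O";
type Hexaco = Record<Trait, number>; // each trait on an assumed 0..1 scale
const TRAITS: Trait[] = ["H", "E", "X", "A", "C", "O"];

// mulberry32: a small seeded PRNG returning floats in [0, 1).
function mulberry32(seed: number): () => number {
  let s = seed >>> 0;
  return () => {
    s = (s + 0x6d2b79f5) >>> 0;
    let t = s;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

const clamp = (v: number, lo: number, hi: number) => Math.min(hi, Math.max(lo, v));

// One drift step: event pressure plus seeded jitter, clamped to a band
// around the baseline profile and to the 0..1 scale.
function driftStep(
  current: Hexaco, baseline: Hexaco, pressure: Partial<Hexaco>,
  rng: () => number, band = 0.15, jitter = 0.02,
): Hexaco {
  const next = { ...current };
  for (const t of TRAITS) {
    const noise = (rng() * 2 - 1) * jitter;
    const lo = Math.max(0, baseline[t] - band);
    const hi = Math.min(1, baseline[t] + band);
    next[t] = clamp(current[t] + (pressure[t] ?? 0) + noise, lo, hi);
  }
  return next;
}

function driftPath(baseline: Hexaco, pressures: Partial<Hexaco>[], seed: number): Hexaco[] {
  const rng = mulberry32(seed);
  const path = [baseline];
  for (const p of pressures) path.push(driftStep(path[path.length - 1], baseline, p, rng));
  return path;
}

// A high-Conscientiousness CEO weathering a crisis (illustrative pressures only).
const ceo: Hexaco = { H: 0.6, E: 0.4, X: 0.5, A: 0.5, C: 0.8, O: 0.6 };
const crisis: Partial<Hexaco>[] = [{ C: 0.05, E: -0.03 }, { C: 0.05 }, { C: 0.05, E: -0.03 }];

const run1 = driftPath(ceo, crisis, 42);
const run2 = driftPath(ceo, crisis, 42);
console.log(JSON.stringify(run1) === JSON.stringify(run2)); // true: same seed, same path
```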

Specialists inside a Paracosm run can forge new computational tools at runtime — a custom risk calculator, a domain-specific cost model, a new metric aggregator. Each forged tool runs inside a V8 sandbox isolate; an LLM judge approves the spec before the tool enters the decision pipeline. The forged tool joins the specialist's permanent toolkit for the remainder of the run, and a summary appears in the RunArtifact for downstream analysis.
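The forge-then-approve gate can be sketched as a per-run registry that admits a tool only after a judge accepts its spec. In this sketch the judge is a plain synchronous function standing in for the LLM judge, and Node's built-in node:vm module stands in for a V8 isolate. node:vm is explicitly not a security boundary, so a real sandbox needs a true isolate. ToolSpec, Judge, forgeTool, and forgeLog are hypothetical names.

```typescript
import { runInNewContext } from "node:vm";

// Hypothetical forge request: a name, a description, and a JS function source.
interface ToolSpec {
  name: string;
  description: string;
  source: string; // e.g. "(x) => x * 2"
}

// Stand-in for the LLM judge: approval plus a reason.
type Judge = (spec: ToolSpec) => { approved: boolean; reason: string };
type Tool = (...args: number[]) => number;

// Per-run toolkit: approved tools stay available for the rest of the run.
const toolkit = new Map<string, Tool>();
// Every verdict is logged, loosely mirroring forged tool summaries in a RunArtifact.
const forgeLog: { name: string; approved: boolean; reason: string }[] = [];

function forgeTool(spec: ToolSpec, judge: Judge): boolean {
  const verdict = judge(spec);
  forgeLog.push({ name: spec.name, ...verdict });
  if (!verdict.approved) return false;
  // Evaluate the source in a fresh context with no host globals and a 50 ms
  // limit on evaluation (later calls to the tool are not time-limited here).
  const fn = runInNewContext(spec.source, {}, { timeout: 50 }) as Tool;
  if (typeof fn !== "function") return false;
  toolkit.set(spec.name, fn);
  return true;
}

// Toy judge: reject any source that mentions host APIs.
const judge: Judge = (spec) =>
  /\b(process|require|import)\b/.test(spec.source)
    ? { approved: false, reason: "touches host APIs" }
    : { approved: true, reason: "pure arithmetic" };

forgeTool({ name: "burnRate", description: "monthly burn", source: "(cash, months) => cash / months" }, judge);
forgeTool({ name: "exfil", description: "bad", source: "() => process.exit(1)" }, judge);

console.log([...toolkit.keys()]); // [ 'burnRate' ]
console.log(toolkit.get("burnRate")?.(1200, 12)); // 100
```

Judging before evaluation means a rejected spec never executes at all, which is the ordering the paragraph describes.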

| System | Schema-typed world | Deterministic kernel | Counterfactual fork | Output |
|---|---|---|---|---|
| Paracosm | Yes, ScenarioPackage JSON | Yes, seeded kernel | Yes, WorldModel.fork() | Typed RunArtifact |
| Sora / Genie 3 / Marble | No | No | No | Pixels or 3D scenes |
| JEPA-family | No | Trained representations | No | Latent vectors |
| LangGraph / AutoGen / CrewAI | Workflow nodes | No | No | Tool execution traces |
| Stanford Generative Agents | Emergent town | No | No | Emergent dialogue |
| OASIS / MiroFish | No | Stochastic | No | Aggregate stats |

The combination of typed contracts, a deterministic kernel, fork-and-compare, and a structured RunArtifact makes Paracosm the substrate for repeatable counterfactual analysis, whereas Generative Agents is the substrate for emergent social phenomena.

```typescript
import { WorldModel } from 'paracosm/world-model';

const wm = await WorldModel.fromPrompt({
  seedText: 'Q3 board brief: the company must decide between aggressive expansion and capital preservation.',
  domainHint: 'corporate strategic decision',
});

const { actors, artifacts } = await wm.quickstart({ actorCount: 3 });

artifacts.forEach((a, i) => console.log(actors[i].name, a.fingerprint));
```

The dashboard's Quickstart tab takes pasted text, a PDF, or a URL and returns three actors whose runs stream live, each on a card with Download JSON, Copy shareable link, and Fork-in-Branches actions.

Paracosm is built on AgentOS, the open-source TypeScript runtime for autonomous AI agents. AgentOS provides the GMI substrate, HEXACO personality vectors, eight-mechanism cognitive memory, multi-agent orchestration, and the V8 sandbox primitives that runtime tool forging depends on. If you want to build your own simulation stack on the same foundations, start with AgentOS.

Paracosm is an open-source TypeScript library for structured counterfactual world simulations. It compiles a scenario into a typed JSON contract, runs it with HEXACO-personality actors and a deterministic kernel, and produces a RunArtifact you can fork, replay, and audit. It is published on npm as paracosm under the Apache-2.0 license.

Sora and Genie 3 generate pixel video or 3D scenes. Paracosm generates structured decisions, metrics, and citations — a RunArtifact you can compare across forks, query by department, and replay byte-equal in CI. Different category of world model, different output shape.

A counterfactual world simulation replays the same scenario with one variable changed (typically the actor's personality, the seed, or an injected event) and surfaces how the world's trajectory diverges as a result. See Kirfel et al. 2025 for the academic framing. Paracosm operationalizes this through WorldModel.fork().

The actor is the entity that receives information, evaluates options, and makes choices. Actors can be colony commanders, CEOs, generals, ship captains, department heads, AI systems, or any decision-making entity. Each actor carries a HEXACO personality vector that shapes how the LLM reasons through their decisions.

Paracosm is a standalone npm package: npm install paracosm. It depends on AgentOS, but neither Paracosm nor AgentOS requires the Wilds.ai application. You can build your own dashboards, batch runners, or analysis tools on top of the library.

Paracosm is Apache-2.0 licensed, with the source on GitHub. The dashboard at paracosm.agentos.sh is also open and free to use during the public preview.

Specialists inside a simulation can synthesize new computational tools as the run progresses — a custom calculator, a domain-specific aggregator. Each forged tool runs inside a V8 isolate; an LLM judge approves the spec before the tool joins the pipeline. The forged tool persists for the rest of the run and appears in the RunArtifact.

Every run produces a typed RunArtifact containing per-turn snapshots, the actor's decisions, specialist analyses with citations, forged tool summaries, and a final fingerprint. Use wm.replay(artifact) to deterministically replay it, or wm.forkFromArtifact(artifact, turn) to branch at a specific turn with a different actor or seed.
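How the final fingerprint is computed is not specified here. One plausible construction, assumed purely for illustration, is a SHA-256 hash over a canonical JSON serialization with object keys sorted at every level, so logically equal artifacts hash identically regardless of key insertion order. canonicalJson and fingerprint are hypothetical helpers built on Node's node:crypto.

```typescript
import { createHash } from "node:crypto";

type Json = null | boolean | number | string | Json[] | { [key: string]: Json };

// Serialize with object keys sorted at every level; arrays keep their order.
function canonicalJson(value: Json): string {
  if (Array.isArray(value)) return `[${value.map((v) => canonicalJson(v)).join(",")}]`;
  if (value !== null && typeof value === "object") {
    const obj = value; // narrowed to the object variant
    const entries = Object.keys(obj)
      .sort()
      .map((k) => `${JSON.stringify(k)}:${canonicalJson(obj[k])}`);
    return `{${entries.join(",")}}`;
  }
  return JSON.stringify(value);
}

// Hex-encoded SHA-256 of the canonical form.
function fingerprint(artifact: Json): string {
  return createHash("sha256").update(canonicalJson(artifact)).digest("hex");
}

const a = { turns: 6, metrics: { morale: 58, resources: 71 }, decisions: ["hold", "expand"] };
const b = { decisions: ["hold", "expand"], metrics: { resources: 71, morale: 58 }, turns: 6 };

console.log(fingerprint(a) === fingerprint(b)); // true: key order does not matter
console.log(fingerprint(a).length); // 64
```

Sorting keys matters because plain JSON.stringify follows insertion order, so two artifacts with identical content could otherwise hash differently.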

Paracosm ships as pure ESM with subpath exports (paracosm/world-model, paracosm/compiler, paracosm/digital-twin, paracosm/leader-presets). Node 20+, Bun 1.x, or any TypeScript runner with ESM support resolves them out of the box.

Try the live demo → | Read the docs → | Browse on GitHub → | Install from npm →