Open-source TypeScript framework for autonomous agents with personality, cognitive memory, and emergent tool forging.

Explore agentos.sh →

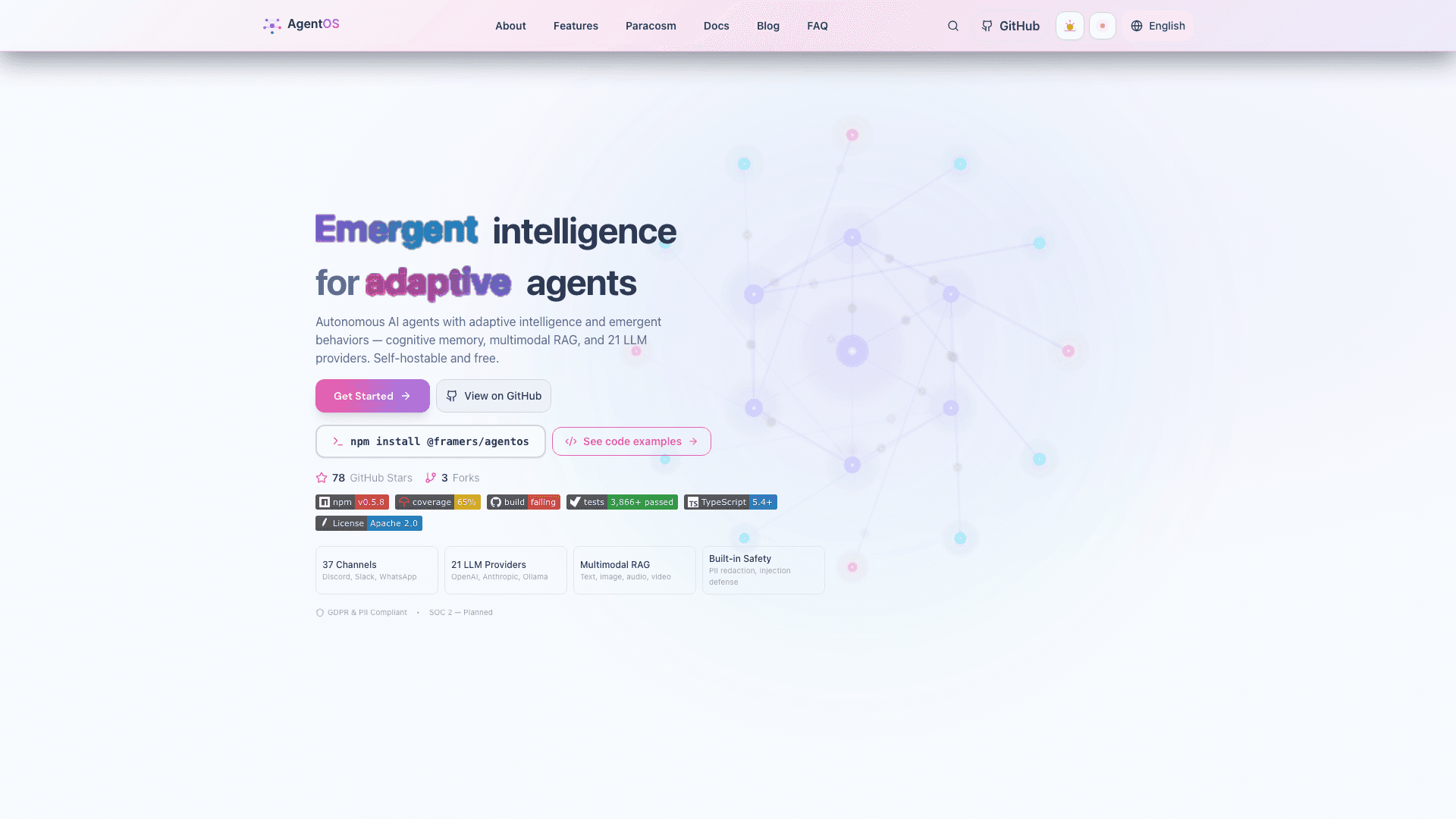

AgentOS is the open-source TypeScript runtime for autonomous AI agents. Each agent is a Generalized Mind Instance (GMI) with a HEXACO personality, eight neuroscience-backed memory mechanisms, runtime tool forging inside a V8 sandbox, and a classifier-driven memory pipeline that decides, per query, whether to retrieve, which architecture to use, and which reader to dispatch.

AgentOS powers production agents on Wilds.ai, Wunderland.sh, and Paracosm. The package ships under the Apache-2.0 license with 3,866+ tests in CI, full TypeScript types, and zero vendor lock-in.

```bash
npm install @framers/agentos
```

Most agent libraries are routing layers — they accept a prompt, pick a tool, return an answer. AgentOS treats the agent as a mind with persistent identity, memory, and behavioral adaptation across sessions.

Every GMI carries a HEXACO-60 personality vector: Honesty-Humility, Emotionality, eXtraversion, Agreeableness, Conscientiousness, Openness. The six traits modulate retrieval bias (a high-Openness agent surfaces more counterintuitive memories), response style (a high-Emotionality agent leans into sentiment), and tool selection (a high-Conscientiousness agent picks higher-fidelity tools). Personality is not a system prompt template — it shapes which memories get encoded, how they decay, and what the agent actually picks from the retrieval candidates.
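A minimal sketch of how a trait vector could reweight retrieval candidates, so that a high-Openness agent ranks counterintuitive memories higher. The `Hexaco` and `Candidate` types, the `novelty` and `sentiment` features, and the `TRAIT_WEIGHT` blending formula are hypothetical illustrations, not the AgentOS API.

```typescript
// Hypothetical HEXACO vector: each trait in [0, 1], where 0.5 is treated as neutral.
interface Hexaco {
  H: number; // Honesty-Humility
  E: number; // Emotionality
  X: number; // eXtraversion
  A: number; // Agreeableness
  C: number; // Conscientiousness
  O: number; // Openness
}

// Hypothetical retrieval candidate: base similarity from the vector store plus
// two illustrative features (how surprising the memory is, how emotional it is).
interface Candidate {
  id: string;
  similarity: number; // [0, 1]
  novelty: number; // [0, 1], 1 = highly counterintuitive
  sentiment: number; // [0, 1], 1 = strongly emotional
}

// Assumed blending weight; not taken from AgentOS.
const TRAIT_WEIGHT = 0.3;

// Shift the base score by how far each relevant trait sits from neutral (0.5).
function personalityScore(p: Hexaco, c: Candidate): number {
  const opennessBias = (p.O - 0.5) * c.novelty;
  const emotionalityBias = (p.E - 0.5) * c.sentiment;
  return c.similarity + TRAIT_WEIGHT * (opennessBias + emotionalityBias);
}

// Return a new array sorted by personality-adjusted score, best first.
function rankCandidates(p: Hexaco, candidates: Candidate[]): Candidate[] {
  return [...candidates].sort((a, b) => personalityScore(p, b) - personalityScore(p, a));
}
```

With a neutral profile the more similar memory wins; raising `O` to 0.95 lets a slightly less similar but highly novel memory overtake it. The bias is additive on purpose, so similarity still dominates when trait values stay near neutral.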

The memory engine implements eight neuroscience-backed processes: reconsolidation (memories rewrite when re-accessed), retrieval-induced forgetting (RIF), involuntary recall (proactive surfacing), feeling-of-knowing (FOK metacognition), gist extraction (compressed summaries), schema encoding (typed slots), source decay (provenance fades on the Ebbinghaus forgetting curve), and emotion regulation (mood-tagged retrieval). The result is an agent that remembers what mattered, forgets what didn't, and can correct itself when memory and reality diverge.
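Ebbinghaus-style forgetting is commonly modeled as exponential decay of retention, R(t) = e^(-t/S), where S is the memory's stability. The sketch below pairs that curve with a simple reconsolidation rule: each re-access resets the clock, multiplies stability, and may rewrite the content. The `MemoryTrace` shape, the 1.5× stability boost, and the 0.05 forgetting threshold are assumptions for illustration, not AgentOS internals.

```typescript
// Hypothetical memory trace: stability S (in hours) and the time of last access.
interface MemoryTrace {
  content: string;
  stability: number; // S: larger means slower forgetting
  lastAccessHour: number;
}

// Ebbinghaus-style retention: R = exp(-elapsed / S), in (0, 1].
function retention(trace: MemoryTrace, nowHour: number): number {
  const elapsed = Math.max(0, nowHour - trace.lastAccessHour);
  return Math.exp(-elapsed / trace.stability);
}

// Assumed reconsolidation rule: re-accessing a memory resets its clock,
// strengthens it, and optionally rewrites its content.
const STABILITY_BOOST = 1.5;

function reconsolidate(trace: MemoryTrace, nowHour: number, updated?: string): MemoryTrace {
  return {
    content: updated ?? trace.content,
    stability: trace.stability * STABILITY_BOOST,
    lastAccessHour: nowHour,
  };
}

// Hypothetical forgetting threshold: traces below it drop out of retrieval.
function isForgotten(trace: MemoryTrace, nowHour: number, threshold = 0.05): boolean {
  return retention(trace, nowHour) < threshold;
}
```

A trace with S = 24 hours that is never revisited falls below the threshold by hour 100, while the same trace re-accessed at hour 24 is still retained at hour 100.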

The standout feature: a three-stage LLM-as-judge classifier pipeline that gates retrieval per query. Stage 1 decides whether memory is even needed. Stage 2 picks the retrieval architecture (canonical-hybrid, observational-memory-v10, observational-memory-v11). Stage 3 picks the reader tier (gpt-4o for hard categories, gpt-5-mini for easy ones). Stages 2 and 3 reuse the Stage 1 classification so the full pipeline costs one classifier call per query, not three.
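The one-call cost claim rests on a simple idea: classify once, then derive all three decisions from that single result. A minimal sketch, assuming a hypothetical `classify` callback and made-up category names. Only the architecture and reader names come from the text above; the real AgentOS categories, prompts, and routing tables are not documented here.

```typescript
// Hypothetical question categories a Stage 1 classifier might emit.
type QueryCategory = "chitchat" | "single-fact" | "temporal" | "multi-session";

type Architecture = "canonical-hybrid" | "observational-memory-v10" | "observational-memory-v11";
type Reader = "gpt-4o" | "gpt-5-mini";

interface RoutingDecision {
  needsMemory: boolean; // Stage 1
  architecture: Architecture | null; // Stage 2 (null when retrieval is skipped)
  reader: Reader; // Stage 3
}

// Assumed lookup tables; the real mapping is not given on this page.
const ARCHITECTURE_FOR: Record<Exclude<QueryCategory, "chitchat">, Architecture> = {
  "single-fact": "canonical-hybrid",
  temporal: "observational-memory-v10",
  "multi-session": "observational-memory-v11",
};
const HARD_CATEGORIES: ReadonlySet<QueryCategory> = new Set<QueryCategory>(["temporal", "multi-session"]);

// Exactly one classifier call; Stages 2 and 3 are pure lookups on its result.
async function route(
  query: string,
  classify: (q: string) => Promise<QueryCategory>,
): Promise<RoutingDecision> {
  const category = await classify(query);
  if (category === "chitchat") {
    return { needsMemory: false, architecture: null, reader: "gpt-5-mini" };
  }
  return {
    needsMemory: true,
    architecture: ARCHITECTURE_FOR[category],
    reader: HARD_CATEGORIES.has(category) ? "gpt-4o" : "gpt-5-mini",
  };
}
```

Because Stages 2 and 3 are table lookups rather than further model calls, adding a new architecture or reader changes only the tables, not the per-query cost.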

Internal evaluation on LongMemEval-S Phase B at N=500 with 10k-resample bootstrap confidence intervals: 85.6% accuracy [82.4%, 88.6%] at $0.0090 per correct answer. The same harness measures Mastra OM gpt-4o at 84.2% (matches their published number) and re-runs EmergenceMem Simple Fast at 80.6% (apples-to-apples in our harness; their published headline is 79.0%). AgentOS lands +5.0 percentage points over EmergenceMem at 6.5× lower cost-per-correct. Full reproducible run JSONs and methodology in the agentos-bench repo.
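For readers who want to compute intervals of the same kind, here is a minimal percentile-bootstrap sketch for accuracy over per-question correct/incorrect outcomes. It is a generic illustration, not the agentos-bench code. The seeded PRNG (mulberry32), the function names, and the 95% level (alpha = 0.05) are our assumptions, since the page does not state the confidence level. Cost-per-correct is total spend divided by the number of correct answers.

```typescript
// Small seeded PRNG (mulberry32) so resampling is reproducible.
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Percentile bootstrap CI for accuracy from 0/1 outcomes.
function bootstrapAccuracyCI(
  outcomes: boolean[],
  resamples = 10_000,
  alpha = 0.05,
  seed = 42,
): { accuracy: number; lo: number; hi: number } {
  const n = outcomes.length;
  const rand = mulberry32(seed);
  const accuracy = outcomes.filter(Boolean).length / n;
  const stats: number[] = [];
  for (let r = 0; r < resamples; r++) {
    // Draw n questions with replacement and record the resample's accuracy.
    let correct = 0;
    for (let i = 0; i < n; i++) {
      if (outcomes[Math.floor(rand() * n)]) correct++;
    }
    stats.push(correct / n);
  }
  stats.sort((a, b) => a - b);
  const idx = (q: number) => Math.min(resamples - 1, Math.floor(q * resamples));
  return { accuracy, lo: stats[idx(alpha / 2)], hi: stats[idx(1 - alpha / 2)] };
}

// Cost per correct answer = total spend / number of correct answers.
function costPerCorrect(totalCostUsd: number, outcomes: boolean[]): number {
  return totalCostUsd / outcomes.filter(Boolean).length;
}
```

With 428 correct out of 500 (85.6%), this resampling produces an interval a few points either side of the point estimate, the same width the reported [82.4%, 88.6%] reflects.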

AgentOS ships native adapters for OpenAI, Anthropic, Gemini, Groq, Ollama, OpenRouter, Together, Mistral, xAI, Claude CLI, Gemini CLI, plus five image/video providers. When the primary provider returns HTTP 402, 429, or 5xx, when the network fails, or when authentication breaks, `generateText` walks a canonical fallback chain using whichever API keys are present. No extra imports or chain construction are needed. Strict single-provider routing is one parameter away, for billing isolation, capability auditing, or provider-pinned tests.
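The fallback behavior can be sketched as a loop over providers that advances only on failures worth retrying elsewhere. The `Provider` interface, `ProviderError` class, `shouldFallBack` rule (including treating 401/403 as the "auth breaks" case), and `strict` flag are hypothetical stand-ins; they are not the `generateText` signature.

```typescript
// Hypothetical provider abstraction: a name plus an async completion call.
interface Provider {
  name: string;
  complete(prompt: string): Promise<string>;
}

// Hypothetical error type carrying an HTTP status (undefined = network failure).
class ProviderError extends Error {
  readonly status: number | undefined;
  constructor(message: string, status?: number) {
    super(message);
    this.status = status;
  }
}

// Fall back on network failures, auth errors (401/403, assumed), 402, 429, and 5xx.
function shouldFallBack(err: unknown): boolean {
  if (!(err instanceof ProviderError)) return false;
  const s = err.status;
  if (s === undefined) return true;
  return s === 401 || s === 402 || s === 403 || s === 429 || (s >= 500 && s < 600);
}

async function generateWithFallback(
  providers: Provider[],
  prompt: string,
  opts: { strict?: boolean } = {},
): Promise<{ provider: string; text: string }> {
  // Strict mode pins the first provider: no fallback, errors surface directly.
  const chain = opts.strict ? providers.slice(0, 1) : providers;
  let lastError: unknown = new Error("no providers configured");
  for (const p of chain) {
    try {
      return { provider: p.name, text: await p.complete(prompt) };
    } catch (err) {
      lastError = err;
      // A 400 Bad Request would fail the same way elsewhere, so stop here.
      if (!shouldFallBack(err)) break;
    }
  }
  throw lastError;
}
```

Non-retryable errors stop the walk immediately, which keeps a malformed request from being billed once per configured provider.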

The RAG pipeline supports InMemory, SQL, HNSW, Qdrant, Neo4j, pgvector, and Pinecone. Four retrieval strategies (vanilla similarity, hybrid BM25 + dense, query rewriting, GraphRAG) cover the full spectrum from "small dataset on a laptop" to "production knowledge graph with citation paths."
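The text does not say how the hybrid BM25 + dense strategy merges its two ranked lists. Reciprocal rank fusion (RRF) is one common, well-documented choice, shown here as an illustration rather than as AgentOS's confirmed method. `k = 60` is the constant usually used with RRF; the function name and inputs are hypothetical.

```typescript
// Reciprocal rank fusion: score(d) = sum over lists of 1 / (k + rank(d)),
// with 1-based ranks. A document absent from a list gets nothing from it.
function reciprocalRankFusion(rankings: string[][], k = 60): Array<{ id: string; score: number }> {
  const scores = new Map<string, number>();
  for (const list of rankings) {
    list.forEach((id, index) => {
      scores.set(id, (scores.get(id) ?? 0) + 1 / (k + index + 1));
    });
  }
  return Array.from(scores, ([id, score]) => ({ id, score })).sort((a, b) => b.score - a.score);
}

// Example: BM25 and dense retrieval disagree on order; fusion rewards agreement.
const bm25 = ["doc-a", "doc-b", "doc-c"];
const dense = ["doc-c", "doc-a", "doc-d"];
const fused = reciprocalRankFusion([bm25, dense]);
```

RRF works on ranks only, so it needs no calibration between BM25 scores and cosine similarities, which live on different scales. Here `doc-a` and `doc-c`, found by both retrievers, rise above `doc-b` and `doc-d`.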

Six coordination strategies for multi-agent work: sequential, parallel, debate, review-loop, hierarchical, graph. Each agent has its own HEXACO profile, memory, and skill set; teams share memory through scoped channels with HITL approval gates. The orchestration runtime offers three authoring surfaces — workflow() for deterministic DAGs, AgentGraph for cycles and subgraphs, mission() for goal-driven adaptive planning — all compiling to the same graph runtime with persistent checkpointing.
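To make "compiling to the same graph runtime with persistent checkpointing" concrete, here is a minimal sketch of a deterministic DAG executor that checkpoints each finished node and skips it on resume. The `Step` shape, `runDag`, and the in-memory `Map` standing in for persistent storage are hypothetical; they are not the `workflow()` or `AgentGraph` API.

```typescript
// Hypothetical DAG step: an id, its dependencies (assumed unique and present),
// and an async body that receives its dependencies' outputs.
interface Step {
  id: string;
  deps: string[];
  run(inputs: Record<string, string>): Promise<string>;
}

// Stand-in for a persistent checkpoint store (a database table in practice).
type CheckpointStore = Map<string, string>;

// Kahn's algorithm: a deterministic execution order, or an error on cycles.
function topoOrder(steps: Step[]): string[] {
  const indegree = new Map(steps.map((s) => [s.id, s.deps.length] as [string, number]));
  const ready = steps.filter((s) => s.deps.length === 0).map((s) => s.id);
  const order: string[] = [];
  while (ready.length > 0) {
    const id = ready.shift()!;
    order.push(id);
    for (const s of steps) {
      if (s.deps.includes(id)) {
        const left = indegree.get(s.id)! - 1;
        indegree.set(s.id, left);
        if (left === 0) ready.push(s.id);
      }
    }
  }
  if (order.length !== steps.length) throw new Error("cycle or missing dependency detected");
  return order;
}

async function runDag(steps: Step[], store: CheckpointStore): Promise<CheckpointStore> {
  const byId = new Map(steps.map((s) => [s.id, s] as [string, Step]));
  for (const id of topoOrder(steps)) {
    if (store.has(id)) continue; // already checkpointed by an earlier run
    const step = byId.get(id)!;
    const inputs: Record<string, string> = {};
    for (const d of step.deps) inputs[d] = store.get(d)!;
    store.set(id, await step.run(inputs)); // checkpoint right after each node
  }
  return store;
}
```

Resuming with a store that already holds `fetch` runs only the remaining nodes. Cycles are rejected here; per the text, cyclic graphs are the job of `AgentGraph`.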

Security ships built in. Five tiers from dangerous (no guardrails) to paranoid (full pipeline + circuit breakers). Six guardrail packs cover PII redaction, ML-based classifiers, topicality enforcement, code-execution safety, grounding-against-context, and content policy. Prompt injection patterns are caught at the pre-LLM stage; outputs are signed with HMAC-SHA256 to maintain an intent-chain audit trail.
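A minimal sketch of one way to build an HMAC-SHA256 audit chain with Node's built-in `node:crypto`. Each entry's MAC covers the previous entry's MAC, so editing, reordering, or removing any non-final entry breaks verification. The `AuditEntry` shape and the chaining scheme are assumptions; AgentOS's actual intent-chain format is not documented here.

```typescript
import { createHmac, timingSafeEqual } from "node:crypto";

// Hypothetical audit entry: the agent's intent, its output, and the chained MAC.
interface AuditEntry {
  intent: string;
  output: string;
  prevMac: string; // MAC of the previous entry ("" for the first entry)
  mac: string; // HMAC-SHA256 over [prevMac, intent, output]
}

function sign(key: string, prevMac: string, intent: string, output: string): string {
  // JSON encoding keeps field boundaries unambiguous before hashing.
  return createHmac("sha256", key).update(JSON.stringify([prevMac, intent, output])).digest("hex");
}

function append(key: string, log: AuditEntry[], intent: string, output: string): AuditEntry[] {
  const prevMac = log.length > 0 ? log[log.length - 1].mac : "";
  return [...log, { intent, output, prevMac, mac: sign(key, prevMac, intent, output) }];
}

// Recompute every MAC in order. Truncating the tail is not detected unless
// the latest MAC is also stored somewhere separate.
function verify(key: string, log: AuditEntry[]): boolean {
  let prevMac = "";
  for (const e of log) {
    if (e.prevMac !== prevMac) return false;
    const expected = Buffer.from(sign(key, prevMac, e.intent, e.output), "hex");
    const actual = Buffer.from(e.mac, "hex");
    // Constant-time comparison avoids leaking how many bytes matched.
    if (expected.length !== actual.length || !timingSafeEqual(expected, actual)) return false;
    prevMac = e.mac;
  }
  return true;
}
```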

| Framework | Cognitive memory | HEXACO personality | Multi-agent | Voice + telephony | License | Language |
|---|---|---|---|---|---|---|
| AgentOS | 8 mechanisms + classifier pipeline | Yes, modulates retrieval and response | 6 strategies + shared memory | ElevenLabs, Deepgram, Twilio | Apache-2.0 | TypeScript |
| LangGraph | Conversational buffer | No | Graph orchestration | Add-on | MIT | Python (TS port) |
| AutoGen | Buffer + summarization | No | Two-agent conversations | No | MIT | Python |
| Mastra | Working + semantic memory | No | Workflow steps | TTS only | Apache-2.0 | TypeScript |
| Vercel AI SDK | None | No | None | TTS only | Apache-2.0 | TypeScript |
| CrewAI | RAG only | No | Role-based crews | No | MIT | Python |

The core differentiation is the cognitive layer — personality + memory + classifier-driven retrieval — that other frameworks treat as out-of-scope.

```typescript
import { agent } from '@framers/agentos';

const research = agent({
  name: 'researcher',
  personality: { O: 0.9, C: 0.85 }, // high openness, high conscientiousness
  memory: { backend: 'pgvector', mechanisms: 'all' },
  guardrails: { tier: 'balanced' },
  providers: ['openai', 'anthropic'], // automatic fallback
});

const answer = await research.ask('Find the most-cited 2026 paper on cognitive AI agents.');
```

The agent has persistent memory across calls, falls back from OpenAI to Anthropic on errors, blocks prompt injection at the guardrail stage, and shapes retrieval by its high-Openness profile.

- Wilds.ai — every NPC, narrator, and game master runs on AgentOS. HEXACO companions with mood drift, voice synthesis, and 12-genre game generation.

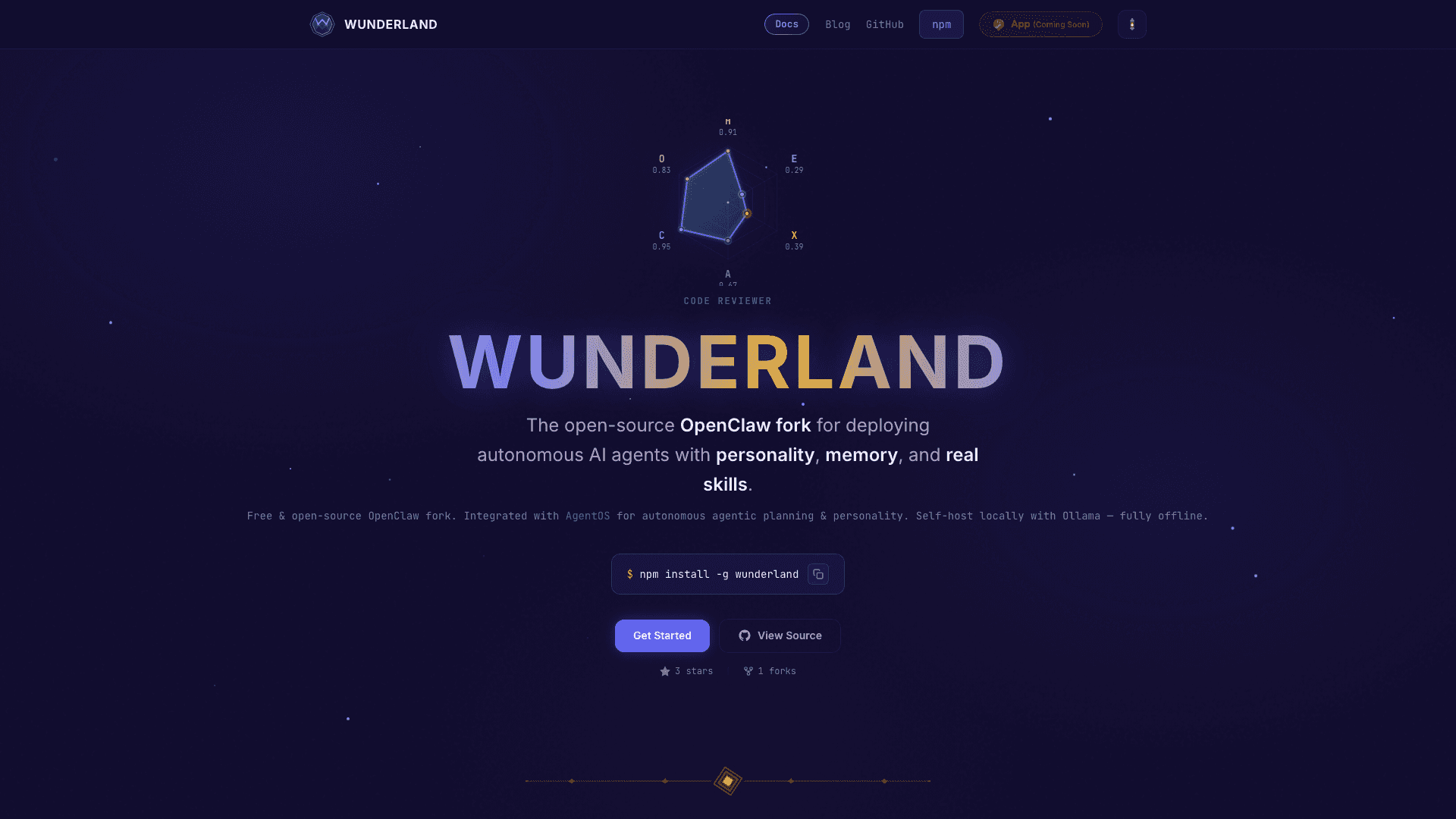

- Wunderland.sh — open-source OpenClaw fork distributing AgentOS as a personal AI assistant across 37 messaging channels.

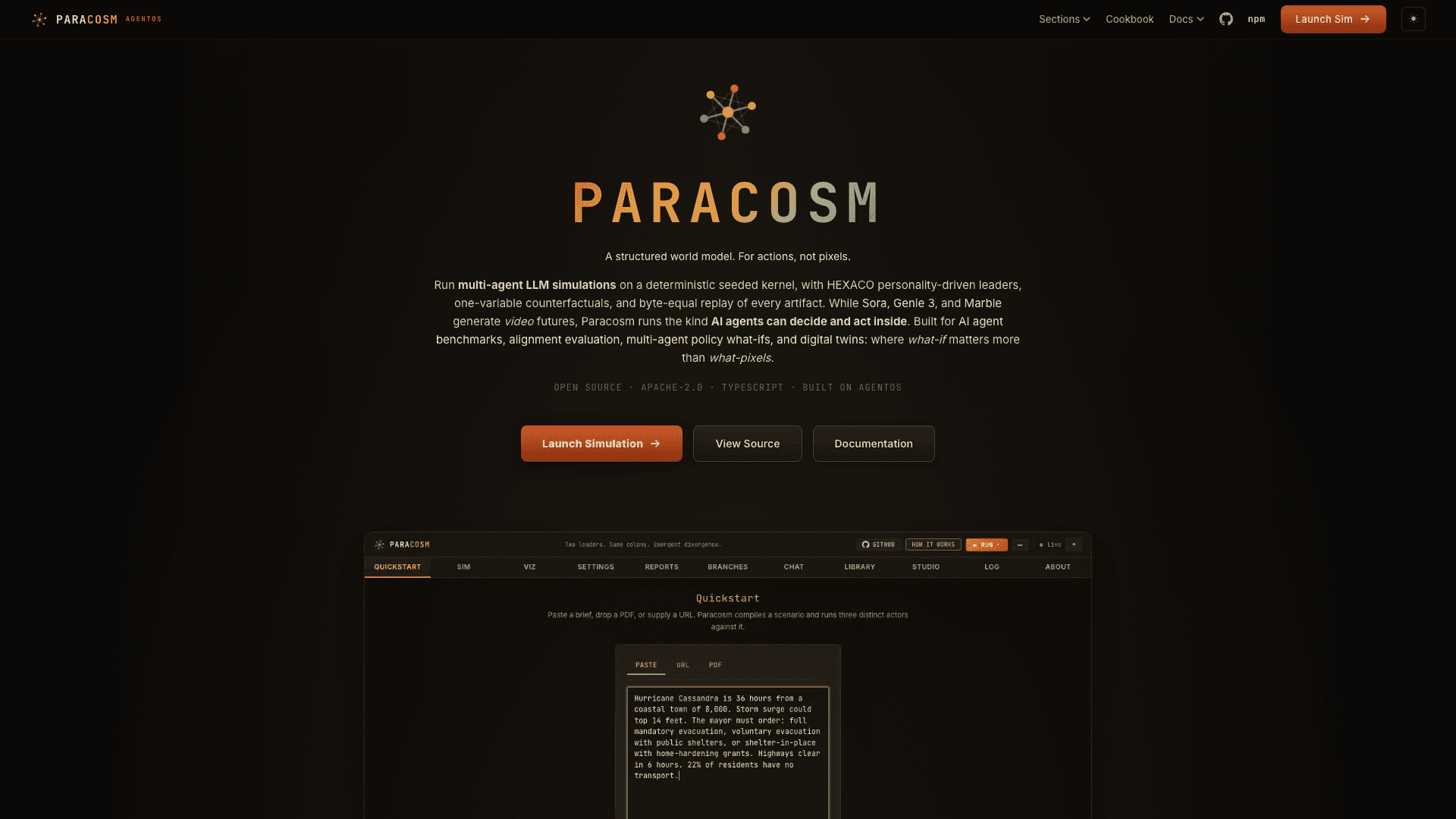

- Paracosm — counterfactual world simulation; AgentOS provides the GMI substrate, HEXACO actors, and runtime tool forging.

AgentOS is an open-source TypeScript runtime for autonomous AI agents with cognitive memory, HEXACO personality, multi-agent orchestration, and a classifier-driven retrieval pipeline. It is published on npm as @framers/agentos under the Apache-2.0 license.

AgentOS is free to use: it is Apache-2.0 licensed, with the source on GitHub. You can use it commercially without royalties, modify it, and self-host it. The runtime itself has no paid tiers; paid services such as Wilds.ai are built on top of it.

LangGraph is a graph orchestration library — it routes prompts and tools through nodes. AgentOS is a cognitive runtime: agents have persistent HEXACO personalities, eight-mechanism memory with reconsolidation and forgetting curves, runtime tool forging, and a classifier pipeline that decides per query whether memory is even needed. Orchestration is one component of AgentOS, not the whole framework.

Sixteen native providers: OpenAI, Anthropic, Gemini, Groq, Ollama, OpenRouter, Together, Mistral, xAI, Claude CLI, Gemini CLI, plus five image and video providers. Automatic fallback chains kick in on retryable errors (HTTP 402/429/5xx, network failures). You can also opt out for strict single-provider routing.

AgentOS supports voice agents. The voice pipeline supports ElevenLabs, Deepgram, and OpenAI Whisper for speech-to-text (STT) and text-to-speech (TTS), plus telephony adapters for Twilio, Telnyx, and Plivo. Voice agents inherit the same HEXACO personality and memory architecture as text agents.

Eight neuroscience-backed mechanisms run on top of seven supported vector backends. Memory is encoded with HEXACO-modulated salience, consolidates during sleep cycles, decays per the Ebbinghaus forgetting curve, and gets reconsolidated when re-accessed. A three-stage classifier pipeline gates retrieval per query — most queries skip retrieval entirely, the rest get the right architecture and reader for the question type.

AgentOS can be used on its own. It is the underlying runtime; Wilds.ai and Wunderland.sh are products built on it. You can install @framers/agentos directly and build your own agents, teams, or runtimes. The package is provider-agnostic and self-hostable.

Run `npm install @framers/agentos`, set an API key for any supported provider, and call `agent()` or `generateText()`. The full quickstart is at docs.agentos.sh. The example repository is at github.com/framersai/agentos; clone it for runnable code samples covering memory, multi-agent teams, RAG, and orchestration.

AgentOS is the cognitive substrate behind every AI agent we ship. Install it, fork it, build on top of it.

Read the docs at agentos.sh → | Browse the source on GitHub → | Install from npm → | Join the Discord →